You’re probably in the same spot as a lot of Omaha business owners right now. You know AI matters. You’ve seen competitors talk about automation, content systems, chat tools, smarter reporting, and faster internal workflows. But when it’s time to make an actual decision, the path gets blurry fast.

Most companies don’t need “more AI.” They need fewer bottlenecks, cleaner operations, better customer experiences, and a clearer way to measure whether any of this will pay off. That’s why the decision to hire an AI consultant shouldn’t start with a tool demo. It should start with the business problem you want solved.

Is It Time to Hire an AI Consultant

If your team keeps saying some version of “we should be doing more with AI,” that’s usually a sign the business has reached the strategy gap. The interest is there, but no one owns the roadmap, the trade-offs, or the implementation choices.

That gap is exactly why AI consulting has moved from a niche service to a mainstream business role. According to Indeed Hiring Lab’s analysis of GenAI consultant demand, the share of Generative AI job postings for management consultant roles rose from 0.2% in January 2024 to 12.4% in January 2025, a more than 60-fold increase. The same analysis notes a pivot from AI development toward practical implementation.

For a small or mid-sized company, that matters. It means the market is no longer asking, “Can AI do anything useful?” It’s asking, “Who can help us apply it to the business we already run?”

What this looks like in Omaha

In Omaha, that usually shows up in familiar ways:

- An e-commerce team wants better product recommendations, faster merchandising support, or less manual catalog work.

- A digital publisher wants to scale research, tagging, on-page optimization, and content workflows without losing editorial control.

- A local service business wants help triaging leads, organizing CRM data, and cutting repetitive admin work.

- A startup wants AI built into a custom web app, but doesn’t want to hire a full internal team too early.

None of those are “AI projects” in the abstract. They’re operating decisions.

Practical rule: If the pain is already costing time, slowing sales, or creating quality issues, it’s usually time to bring in outside help.

The best reason to hire an AI consultant isn’t fear of missing out. It’s that your company has enough process, data, and customer activity for AI to improve the work, but not enough internal bandwidth to sort through vendors, models, compliance concerns, and workflow design on your own.

A good first step is reviewing what AI consulting for small businesses looks like in practice, then deciding whether you need strategy, implementation, or both. That distinction saves a lot of wasted spending.

Signs you’re ready now

Some businesses should wait. Others shouldn’t.

You’re likely ready if:

- Your team repeats manual tasks every day and no one has time to redesign the workflow.

- Your data exists but isn’t usable across systems like your CRM, CMS, analytics stack, or inventory platform.

- You’ve tried AI tools already but got inconsistent output, low adoption, or no measurable business result.

- Leadership wants a plan and your internal team can’t confidently define scope, risk, or ROI.

If that sounds familiar, the issue usually isn’t whether AI belongs in the business. It’s whether you have the right person translating business goals into a realistic rollout.

Define Your Goals Before You Define Your Budget

An Omaha owner sits down to hire AI help and starts with the wrong number. They ask for a budget before they define the job.

That usually leads to a bloated scope, fuzzy proposals, and a consultant who fills the gaps with assumptions. A better starting point is simpler. Name the business problem, the process owner, and the result that would justify the spend.

Start with one business constraint

Choose the issue that is costing money, time, or attention right now.

For some companies, that is a customer service queue that keeps growing. For others, it is slow lead follow-up in the CRM, product content that never gets published on time, or manual review work in finance and operations. In Omaha, I see this often with service businesses, local retailers, multi-location operators, and B2B firms that have decent systems in place but too much handwork between them.

Good AI projects usually begin with a bottleneck the team already feels every week.

Write the goal in plain business language. Cut support response time. Improve lead routing. Reduce manual review hours. Raise quote turnaround speed. Clean up reporting. If a consultant cannot connect the work to one of those outcomes, the project is still too abstract.

Tie the goal to an operational result

AI budgets make sense when the expected result is concrete.

A manufacturer does not need "an AI strategy" as the first deliverable. They may need faster intake on distributor inquiries, cleaner product data for the sales team, or a way to summarize service tickets before they hit account managers. A law firm may want help reviewing recurring document types and routing them faster, which is why role-specific examples such as AI legal assistants are useful. They show how AI fits a business function instead of turning into a generic chatbot experiment.

For a local company, the first win is often narrow and operational. Summarizing inbound leads. Classifying support tickets. Drafting first-pass product copy for review. Pulling common insights from call notes. None of that sounds flashy. It does save hours and reduce inconsistency.

Build a one-page decision brief

Before you talk to candidates, put the project on one page. This step filters out weak consultants fast because strong candidates respond well to clear business constraints.

| Decision area | What to write down |
|---|---|
| Business problem | The workflow, delay, or handoff you want to improve |
| Current process | How the work happens now, including who touches it |
| Desired outcome | The specific operational result you want |
| Systems involved | CRM, CMS, Shopify, analytics, internal tools, spreadsheets |
| Constraints | Budget range, timeline, compliance needs, team capacity |
| Owner | The person responsible for decisions, rollout, and adoption |

This brief also helps you decide whether AI is the right fix. Sometimes the actual problem is messy data, no process owner, or approval steps that slow everything down. A good consultant will say that directly.

If you want a benchmark for what strong firms tend to cover, review this list of AI consulting firms for business strategy and implementation. The useful comparison is not who sounds the most technical. It is who can turn a business problem into a scoped engagement with clear milestones.

Do not start with the tool

Business owners often open with tool names. ChatGPT. Claude. Copilot. A chatbot. An agent.

That is backward.

Tool choice comes after the use case, the workflow, and the success metric are clear. Otherwise, the business starts shaping the problem around whatever the software can do, instead of choosing software that fits the job.

The budget conversation gets easier once the goal is defined well. Scope gets tighter. Proposals get easier to compare. ROI stops sounding theoretical and starts looking like hours saved, faster turnaround, or more revenue from a process that was already under strain.

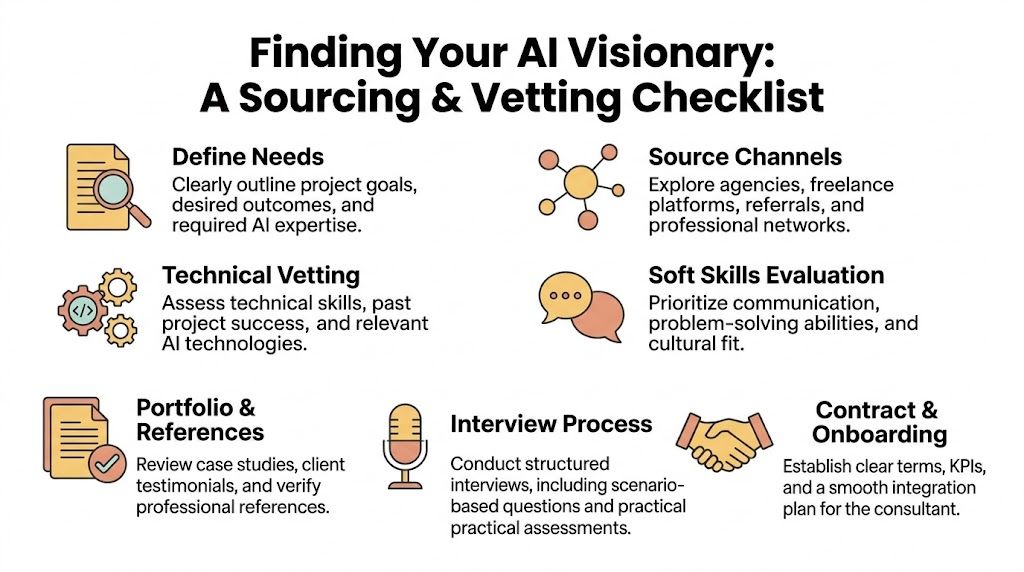

How to Source and Vet Top AI Talent

The market is crowded. Some consultants are excellent operators. Some are skilled technologists who struggle to connect their work to business priorities. Some are mostly repackaging public tools and calling it transformation.

That’s why sourcing matters less than vetting. A referral, a freelance platform, a boutique firm, or a local agency can all work. The question is whether the person can diagnose your business, not just talk fluently about models and prompts.

Where to look first

Each sourcing channel has trade-offs.

- Freelance platforms work well when you have a narrow project, a clear owner, and enough internal structure to manage the engagement.

- Specialized agencies fit better when the work touches strategy, implementation, design, data, and change management at the same time.

- Referrals can be strong, but only if the referral came from a business with similar systems, similar stakes, and similar operating complexity.

- Local partners are useful when leadership alignment, on-site sessions, or cross-functional coordination matter.

If you’re comparing consultant types, this guide on finding the right Machine Learning consulting firms is useful because it frames the search around business fit and delivery capability, not just technical labels.

The first screen should focus on failure risk

Most owners assume the biggest hiring risk is weak technical skill. Often it isn’t. It’s weak diagnosis.

The University of Queensland summary on why AI projects fail notes that AI projects fail at a rate of 80-95%, and identifies poor data quality as the top failure factor. That means your first screening conversation should test whether the consultant knows how to evaluate data readiness, system constraints, and workflow reality before recommending a solution.

A useful screening checklist includes:

- Can they explain what data they need? If they jump to tools before asking about source systems, quality, access, and ownership, that’s a warning sign.

- Do they pressure you toward a big build too early? Mature consultants usually consider whether an existing model or off-the-shelf tool is enough before proposing custom development.

- Can they describe adoption, not just implementation? A tool that no one uses is a failed project with better branding.

Later in your search, it can help to compare firms that blend digital execution with AI strategy. One reference point is this list of AI consulting firms, especially if your project touches SEO, web applications, automation, and business process design together.

What a strong portfolio actually shows

Most portfolios are padded. Screenshots, buzzwords, architecture diagrams, and tool logos don’t tell you much.

Look instead for evidence of these three things:

- The consultant started with a business process. They can explain the workflow before and after.

- They handled messy reality. Data wasn’t perfect, teams needed training, systems didn’t fit cleanly, and priorities shifted.

- They can explain outcomes without hiding behind jargon. If they can’t describe what changed for the business owner, they probably weren’t close enough to the result.

A real consultant can tell you where a project almost broke, what had to change, and what the client had to do internally for the work to succeed.

That’s the difference between a technician and a partner.

The Right Interview Questions and Red Flags

A typical Omaha interview for an AI consultant goes wrong in a familiar way. The owner asks about tools. The consultant lists platforms, model names, and automations. Thirty minutes later, nobody has discussed margin, staffing pressure, customer response times, or where the work will break inside the business.

A useful interview sounds closer to an operations meeting than a technical screening.

The point is to learn how the consultant makes decisions when the situation is messy. Requirements will change. Data will have gaps. Department heads will be busy. Someone on your team will ask, “Who owns this if it gives a bad answer?” A consultant worth hiring can handle that conversation without hiding behind jargon.

Questions that reveal business judgment

Ask questions that force trade-offs.

- “What would you need to understand about our business before recommending any AI solution?” A strong answer starts with revenue goals, workflows, current systems, approval points, and risk. In practice, a good consultant should ask as many questions about your operation as about your software.

- “What would make you tell us not to use AI here?” This question saves money. Good consultants will tell you when a simpler fix is better, such as a cleaner intake process, better reporting, or standard automation.

- “Tell me about a project where the original plan failed. What changed?” Look for ownership. The best answers include what went wrong, what assumptions proved false, and how the consultant adjusted scope or sequence.

- “If we can fund only one pilot this quarter, where would you start?” Their answer should balance payoff and practicality. For example, an Omaha distributor may get faster results from AI-assisted quoting than from a broad customer service chatbot.

- “How do you decide whether our data is usable enough to start?” Weak answers here usually lead to delays, cleanup costs, or a pilot that looks good in a demo and fails in production.

- “Who on our side has to be involved for this to work?” Listen for department leads, operators, subject matter experts, and the person who can approve process changes. If they mention only IT, they are probably underestimating adoption risk.

One pattern matters. Strong consultants answer in terms of business constraints, not just technical options.

Ask about governance in plain English

Governance sounds like a big-company concern until a small business has to explain why customer data was exposed, why a model produced inconsistent results, or why no one signed off on the final workflow.

According to Quandary Peak’s summary citing a 2025 Gartner report, SMBs are twice as vulnerable to AI project failures from poor governance. That should come up in the interview. If a consultant cannot explain privacy, approval rules, bias risk, and human review in language a business owner can use, treat that as a warning.

You are not looking for a legal lecture. You are looking for clear operating rules.

| Ask this | What a solid answer sounds like |
|---|---|
| How do you handle sensitive data? | They explain who gets access, where data is stored, what should stay out of public tools, and who approves exceptions |
| How do you reduce bad outputs? | They describe testing, review steps, guardrails, and where a person must check the result before it goes live |
| How do you address bias or unfair outcomes? | They identify the decisions with the most risk, define how outputs will be checked, and explain how issues get corrected |
| Who owns the final decision? | They state clearly that staff remain accountable and AI supports the process rather than replacing judgment |

Red flags that show up fast

Some problems are obvious early.

- They lead with tools instead of your numbers. A consultant selling a stack often has a prebuilt answer before they understand the problem.

- They promise precise results before discovery. Real operators can estimate ranges and likely wins, but they do not claim certainty without seeing the workflow.

- They make simple questions sound complicated. If they cannot explain their approach to your leadership team, they will struggle to get buy-in from managers and frontline staff.

- They skip over internal effort. Useful AI work always needs client time for process review, testing, approvals, and training.

- They wave away risk. That usually means inexperience, not confidence.

I would add one more. Be careful with consultants who treat every business the same. An Omaha manufacturer, healthcare group, law office, and home services company will not share the same risk profile, buying cycle, or operational bottleneck. A strong candidate adjusts the recommendation to the business in front of them.

If a consultant makes AI sound easy, ask what the client had to change internally. The answer will tell you whether they have actually led a rollout or just sold one.

The right candidate makes the work clearer. They do not make it sound magical.

Understanding Pricing Models and Contract Essentials

A lot of Omaha owners ask the same question early. "What should this cost before I even know the full scope?" That is a fair question, especially if you are comparing a freelancer, a specialist consultant, and an agency partner.

The honest answer is that pricing follows business scope, not AI hype. A two-week assessment for a sales team is priced differently than a six-month rollout that touches customer service, operations, and reporting. If a consultant gives you a flat quote before they understand the process, the users, and the systems involved, treat that as a warning sign.

Common pricing models

Three pricing structures show up most often.

| Model | Best for | How it usually works |
|---|---|---|
| Hourly | Short advisory work, audits, second opinions, technical or workflow reviews | You pay for access to senior judgment. This works well when you need answers, not a full build. |
| Project-based | Discovery, pilot programs, workflow redesign, scoped implementation | The consultant prices a defined outcome with milestones, deliverables, and a clear end point. |
| Retainer | Ongoing support across multiple teams or phases | You pay a monthly fee for continued strategy, optimization, training, and oversight. |

Marketplace pricing for AI consultants ranges widely based on experience and scope. That is exactly why the model matters more than the headline rate.

For a first engagement, project pricing is usually the safest option for an SMB. It forces both sides to define the work, set review points, and agree on what "done" means. Hourly work can drift if no one is managing scope. Retainers can make sense later, but they are easier to justify after one workflow has already produced a result.

I have seen this play out with local companies. An Omaha professional services firm may only need ten hours of advisory help to choose the right internal use case and avoid buying the wrong software. A manufacturer on the edge of town might need a fixed-scope pilot tied to quoting, scheduling, or document handling. A healthcare group often needs more contract detail and slower implementation because privacy, approvals, and risk review take time.

What a workable contract should include

The contract does not need to be long. It does need to be specific.

A good statement of work should cover:

- Scope and exclusions. List the workflows, teams, systems, and decisions included in the engagement. List what is outside scope too.

- Deliverables and milestones. Name the outputs: discovery summary, process map, pilot recommendation, implementation plan, training session, testing checklist, or reporting dashboard.

- Timeline and review points. Set dates for drafts, feedback, approvals, and final delivery. That keeps the project from stalling between meetings.

- Client responsibilities. Spell out who on your team provides access, approves changes, joins workshops, and signs off on deliverables.

- Data handling and confidentiality. If customer records, financial information, healthcare data, or internal documents are involved, the contract should say exactly how that information is accessed, stored, and used.

- Intellectual property. Clarify ownership of prompts, workflow designs, documentation, automations, code, and training materials.

- Change requests and overages. State how added work gets approved and billed. This one line prevents a lot of avoidable tension.

- Success criteria. Tie the engagement to business outcomes you can review, such as time saved, faster turnaround, lower manual workload, or fewer handoff errors.

The weak point in many AI contracts is not price. It is vagueness. If the proposal says "AI strategy support" without naming decisions, deliverables, or owners, you are buying ambiguity.

Pricing mistakes business owners make

The cheapest option is often the most expensive one to clean up.

A low hourly rate can look attractive until the consultant burns time in discovery, hands over vague recommendations, and leaves your staff to sort out execution. On the other side, a large retainer can be wasteful if you only need a narrow pilot with one department.

The better question is simple. What buying structure gives you the clearest path to a business result?

For many Omaha companies, that means starting with a defined project, proving value in one workflow, then deciding whether ongoing support is justified. If you need strategy and execution under one roof, it can help to work with an Omaha digital marketing and development agency that already handles web, automation, and process improvement work alongside AI consulting. Up North Media is one local example that offers those services in a single engagement.

Specific contracts save money. Clear scope saves more.

Maximizing ROI and Your Local Omaha Advantage

An Omaha business owner approves an AI pilot, the team tests it for two weeks, and early feedback sounds positive. Then a critical question shows up. Did the work save time, reduce errors, or bring in more revenue, and who is responsible for proving it?

That is where ROI is won or lost. A pilot only matters if it holds up inside the way your business already operates. If no one owns the process after launch, the tool gets used inconsistently, review steps get skipped, and the project turns into a line item instead of an improvement.

What ROI tracking should look like

For small and midsize companies, ROI usually shows up first in operations. That means fewer staff hours spent on repetitive work, faster turnaround, cleaner handoffs between teams, and less rework.

The right measurement is tied to one workflow. If AI helps your service team draft replies, track response time, revision rate, and tickets handled per rep. If it supports a finance or underwriting process, track turnaround time, exception rates, and how many cases move through without manual follow-up. Broad language like “AI transformation” does not help an owner decide whether to keep investing.

The 24 Seven hiring guide notes that hybrid freelance-agency models can cut costs by 40% versus a full-time hire and deliver pilots 25% faster, and it points to KPI tracking such as cost savings per AI workflow to measure ROI within 3 to 6 months.

Useful KPI categories include:

- Time saved on recurring tasks

- Cycle time reduction in approvals, support, underwriting, or content production

- Error reduction after review steps are added

- Output capacity without adding headcount

- Revenue impact when AI affects lead response, conversion, retention, or sales follow-up

A good consultant should help you set the baseline before work starts. Otherwise, every result gets argued later.
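
To make the baseline idea concrete, here is a minimal sketch of how one workflow's time savings and payback period could be tracked. Every name and number in it is hypothetical: `WorkflowBaseline`, the hours, the hourly cost, and the pilot cost are illustrative assumptions, not figures from any cited source or real engagement. It also counts only staff time saved, so error reduction and revenue impact would need their own measures.

```python
from dataclasses import dataclass


@dataclass
class WorkflowBaseline:
    """Hypothetical before/after measurements for one workflow."""
    hours_per_week_before: float  # measured before the pilot starts
    hours_per_week_after: float   # measured once the pilot is in use
    loaded_hourly_cost: float     # assumed fully loaded staff cost, in dollars
    pilot_cost: float             # assumed one-time consulting and tooling cost


def weekly_savings(w: WorkflowBaseline) -> float:
    """Dollar value of staff hours saved each week."""
    hours_saved = w.hours_per_week_before - w.hours_per_week_after
    return hours_saved * w.loaded_hourly_cost


def payback_weeks(w: WorkflowBaseline) -> float:
    """Weeks until cumulative time savings cover the pilot cost."""
    savings = weekly_savings(w)
    if savings <= 0:
        # No time saved means the pilot never pays back on this measure alone.
        return float("inf")
    return w.pilot_cost / savings


# Illustrative numbers only.
support_replies = WorkflowBaseline(
    hours_per_week_before=20.0,
    hours_per_week_after=12.0,
    loaded_hourly_cost=40.0,
    pilot_cost=8000.0,
)

print(weekly_savings(support_replies))  # 320.0
print(payback_weeks(support_replies))   # 25.0
```

With these assumed inputs, the pilot saves 8 hours a week and pays for itself in 25 weeks. The useful part is not the arithmetic but the discipline: the "before" number has to be written down before the work starts.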

Why local context helps

Omaha companies tend to make decisions with tighter teams, shorter reporting lines, and less appetite for expensive experiments that drag on for quarters. That changes what good AI consulting looks like. The best fit is often a focused project with a clear owner, a practical rollout, and regular working sessions with the people who will use the process.

Local context matters for another reason. Many Omaha businesses are balancing AI decisions against existing investments in their website, CRM, analytics, paid media, and internal workflows. A recommendation that looks good in a demo can create extra work if it does not fit the rest of the operation. That is why some companies prefer a partner with experience across AI, web, and process execution, such as an Omaha digital marketing agency that also handles automation and development work.

Proximity helps, but fit matters more. You want faster alignment, plain-language recommendations, and a consultant who understands what a regional business can realistically implement this quarter.

The strongest AI engagements are usually the least flashy. They solve one business problem, prove value in numbers your leadership team already trusts, and expand only after the first use case earns its keep.

If you’re evaluating whether to hire AI consultant support for your Omaha business, Up North Media can help you scope the right opportunity, vet the business case, and map a practical path from pilot to measurable ROI.